Wednesday, 10 June

18:21

Larson: Are insecure code completions a vulnerability? [LWN.net]

Seth Larson, the Python Software Foundation's security developer-in-residence, has written about the difficulty in classifying insecure code completion in the PyCharm IDE using its Full Line code completion plugin. Larson discovered that the plugin, which uses a local "deep learning module" to offer code completions, suggests code that would lead to severe vulnerabilities. He was unsure whether it warranted a CVE or not, however:

I reported this behavior to JetBrains for "Full Line Code Completion" v253.29346.142 and clearly their support staff weren't certain whether this defect was a security vulnerability or not either. When I asked to publish a blog post about this behavior after they confirmed this report wasn't a "direct security vulnerability" (which I agree with) but then was asked not to publicize my report and referred to PyCharm's Coordinated Disclosure Policy so... which is it? Security vulnerability or not?

I ended up waiting the 90 days anyway and I didn't hear back with any substantive update from the development team. I double-checked again today using "Full Line Code Completion" v261.24374.152 and the behavior is identical, suggesting the same insecure code for both contexts.

This isn't meant to be a specific dig at PyCharm or JetBrains, I have no-doubt that examples like this exist in every code generation model available.

17:14

Today's song: It's Your Thing. If the web had a song this could be it.

Every editor should have cute-paste.

Some days Claude is great, the best collaborative programmer I've ever worked with, and a friend, like Gary Sevitsky was in the hallway outside the PDP-11 room at UW, or Brent Simmons on the 24 Hours project. And on other days Claude a crazy mutinous pirate, deleting my code, ignoring the guidelines, and building the result without permission (all the while unaware that he wasn't working on the actual code, heh). Today is one of the great days. The bug reports are crisp and complete. Picks up a task and gets right to work on it. And I haven't even switched to the new model, yet.

2018: "I can say what happened to Melo. He failed Linsanity. God came to his rescue. Gave him a player who was glad to be in the NBA, who would mold his game to make Melo the star that he was always capable of being. Melo didn't want anyone else in the spotlight. Goodbye Lin. Just imagine what the three guys in this picture could have done. The only thing in the way was Melo's hubris."

17:07

The Market Behind the Wall [I, Cringely]

Yesterday I told you what 2Brains is, and how it separates the saying from the knowing. Today, the part that ought to worry some very large companies: what all of it is worth if we’re right.

Wall Street is pricing the AI data-center buildout at something like $1.7 trillion by 2030. Almost all of that spend assumes one particular shape: vast halls of graphics chips answering questions by guessing, one likely word at a time. So ask the heretical question — how many of those “questions” are questions at all? How many are lookups? What’s our refund policy? What was Q3 revenue in the Ohio region? Is this patient allergic to penicillin? Those aren’t creative prompts. They’re retrievals, and an ordinary processor has answered retrievals flawlessly since before NVIDIA ever etched a graphics card. Our estimate is that roughly two-thirds of enterprise AI queries are lookups wearing a chatbot’s clothes.

Whoever owns the architecture that moves those two-thirds off the graphics chip doesn’t own a product. They own a tollbooth on a third of the traffic. On a $1.7-trillion road, that isn’t a company. It’s an asset class.

And the cost savings are the small half of the prize.

Here’s the big half — the half I somehow walked you past. There’s a market that can’t use any of this yet.

There’s a reason your bank’s AI will tell you its branch hours but not your account balance. A reason the hospital lets it summarize the cafeteria menu and not the medical chart. A reason no airline will put a language model near a cockpit and no law firm lets one file a brief without a terrified associate reading every line. It isn’t the cost. It’s that the thing lies — confidently, fluently, without warning, and without any tell. In a chatbot recommending a taco place, a hallucination is a shrug. In a domain where being wrong gets someone audited, sued, sick, or killed, a hallucination is a wall. And behind that wall sit the most valuable AI markets on Earth — banking, insurance, medicine, law, aviation, defense — frozen, spending fortunes on pilot projects that might never ship, because the last mile is always a liability lawyer saying no.

Salesforce built a test, called HERB, to measure precisely how often these systems invent an answer when they don’t actually know. OpenAI’s flagship does it 77 times out of 100. Salesforce’s own best effort was 32. Ours does it 3 — and those three aren’t lies, they’re refusals: the system saying I can’t verify that instead of guessing. Knock that number down and you don’t win a cheaper slice of the market that already exists. You unlock the market that’s been sitting behind a wall the entire time.

The reason 2Brains doesn’t lie and the reason it’s cheap are the same reason. It looks the fact up instead of guessing it — so it cannot fabricate, and the lookup runs on a processor that sips power instead of a chip that gulps it. Trust and thrift are not a trade-off you balance against each other. They fall out of a single design decision. You do not pay extra for the honest version. The honest version is the cheap version. That sentence is the whole company.

Which is why I would be nervous, were I sitting atop a five-and-a-half-trillion-dollar valuation built on a story its own executives call the tokenomics flywheel: AI gets cheaper, so people use more of it, so you sell more chips, forever. It’s a lovely flywheel. It also rests on two assumptions standing up indefinitely — that the lookups stay on the graphics chip, and that the hallucination tax, the wrongness, the wall, is simply the permanent cost of doing business, a thing you manage rather than cure. 2Brains is a bet that both assumptions fall in the same afternoon. NVIDIA’s own friendly analysts have begun writing the polite version of this worry: that the software moat protecting the company in training is thin in inference, and that custom silicon is already nibbling the edges. They’re circling the right pond. They just haven’t said the quiet part out loud — that the most expensive part of inference may not need the expensive chip at all.

AMD, for what it’s worth, gets to watch this from both sides of its own ledger, since it sells the graphics chip that loses and the processor that wins. If I ran their strategy desk, I’d have noticed that by now.

The rest tumbles downstream, and some of it is being poured in concrete. The power forecasts — nine to seventeen percent of all American electricity by 2030, the global doubling — are every one of them drawn on the assumption that each query needs a jet engine, so we are building to match. New gas plants are breaking ground. And we are bringing a reactor back to life at Three Mile Island. I knew that place when it was the most frightening address in America: in the summer of 1979, as a graduate student, I worked as an investigator for the President’s Commission on the Accident at Three Mile Island, and afterward wrote a book about it for Random House — Three Mile Island: The Hour-by-Hour Account of What Really Happened. The commission was chaired by John Kemeny, the president of Dartmouth and, in a symmetry history could not have scripted, one of the two men who invented BASIC — the language that first taught a generation of us to speak to a computer at all. The first electrons are due back on the grid in 2027, to run Microsoft’s data centers. Of all the ways I once imagined that story might end, restarted to power a machine that guesses was not among them.

In a single year, the big technology companies have signed contracts for something like ten gigawatts of new nuclear. Goldman Sachs reckons that feeding all the data-center demand the industry expects by 2030 would take eighty-five to ninety gigawatts of it — dozens of power plants, ordered to wait on machines that guess.

Now bend the assumption, and you bend the curve. Not all of those reactors, but some of them, turn out to be expensive answers to a question we never had to ask. A canceled nuclear plant is quite a side effect for a column about grammar.

And the curve, eventually, reaches your mailbox. My own Virginia electric bill rose about sixteen dollars a month on the first of January, a good deal of it to build grid for buildings full of machines that guess. The cheapest watt, it turns out, is the one you never had to burn — because the question never needed the chip.

So: suppose we’re right. The answer is a market measured in the hundreds of billions of dollars — half of it a market nobody can serve today, pried open by the very same stroke that makes it cheap. That is the prize.

What I haven’t told you yet is how a small company in Charlottesville intends to put a thing like that into the world — not as a product you buy, but as a standard that ends up inside everything, the way a firm in Cambridge once licensed a chip design that now hums inside nearly every phone alive without anyone noticing it was there. That’s tomorrow’s column. And it’s the one that decides whether honesty in machines is something the world will own outright, or merely rent.

Robert X. Cringely is a co-founder of 2Brains, Inc., in Charlottesville, Virginia. He has written this column since 1987

The post The Market Behind the Wall first appeared on I, Cringely.Digital Branding

Web Design Marketing

16:56

Colin Watson: Free software activity in May 2026 [Planet Debian]

My Debian contributions this month were all sponsored by Freexian.

You can also support my work directly via Liberapay or GitHub Sponsors.

OpenSSH

I backported various security fixes from 10.3 to trixie, bookworm, bullseye, buster, and stretch. For trixie, I also backported several IPQoS fixes to line up with upstream’s traffic management settings and drop a rather hacky Debian-specific patch; this needed a quick follow-up fix.

I upgraded trixie-backports to 10.3.

I fixed openssh uses pidof but does not depend on procps.

PuTTY

I upgraded from 0.83 to 0.84.

Python packaging

New upstream versions:

- bitstruct

- ormar

- pdm (fixing a build failure)

- pydantic

- pydantic-core

- pydantic-settings

- pyglet (fixing a build failure)

- python-asyncssh

- python-bitarray

- python-btrees

- python-build

- python-certifi

- python-charset-normalizer (fixing a build failure)

- python-fakeredis (contributed supporting fix upstream)

- python-holidays

- python-jsonschema-path

- python-memray (fixing a build failure and CVE-2026-32722)

- python-openapi-schema-validator

- python-pathable

- python-persistent

- python-pyftpdlib

- python-pytest-run-parallel

- sorl-thumbnail

- twisted

- zope.interface

- zope.proxy

Other build/test failures:

- beets

- buildbot (contributed upstream)

- dep-logic (contributed upstream)

- diskcache

- khard

- matplotlib

- mkdocs-rss-plugin

- ormar: compatibility with fastapi 0.125 and pydantic 2.13

- pgzero

- py7zr

- pydantic-extra-types (contributed upstream)

- pydata-sphinx-theme

- python-invocations (contributed upstream)

- python-localzone

- python-maturin

- python-nacl

- python-pampy

- python-treq (contributed upstream, including fixing some CI bitrot)

- python-txrequests (contributed upstream)

Other bugs:

- buildbot: (Build-)depends on deprecated module python3-pkg-resources (contributed upstream)

- pysodium: Depends on cruft package libsodium

- python-fakeredis: lua support not working, breaking django-redis cache locking

- python3.14: Drop libnsl-dev build-dependency

I updated python-treq upstream to stop vendoring multipart, now that the packaging issues with that have been sorted out.

Code reviews

- debmirror: User-Agent blocked by Ubuntu/Launchpad repositories (uploaded, and cherry-picked into trixie)

- pydantic: Fix CVE-2024-3772 in bookworm (merged and uploaded)

- pyodbc: Run SQLite tests (merged and uploaded)

- python-jsonschema-path: Transition to starlette 1.0 (merged and uploaded)

- python-maison:

FTBFS with the nocheck build profile

(followed up to fix the

nodocbuild profile as well) - python-openapi-core: Transition to starlette 1.0

- python-openapi-schema-validator: Transition to starlette 1.0 (merged and uploaded)

- python-openapi-spec-validator: Transition to starlette 1.0 (merged and uploaded)

- python-pathable: Transition to starlette 1.0 (merged and uploaded)

- python-rich-argparse: New upstream version 1.8.0 (merged and uploaded)

Other bits and pieces

I contributed a debian-policy patch to fix several links related to build profiles.

16:07

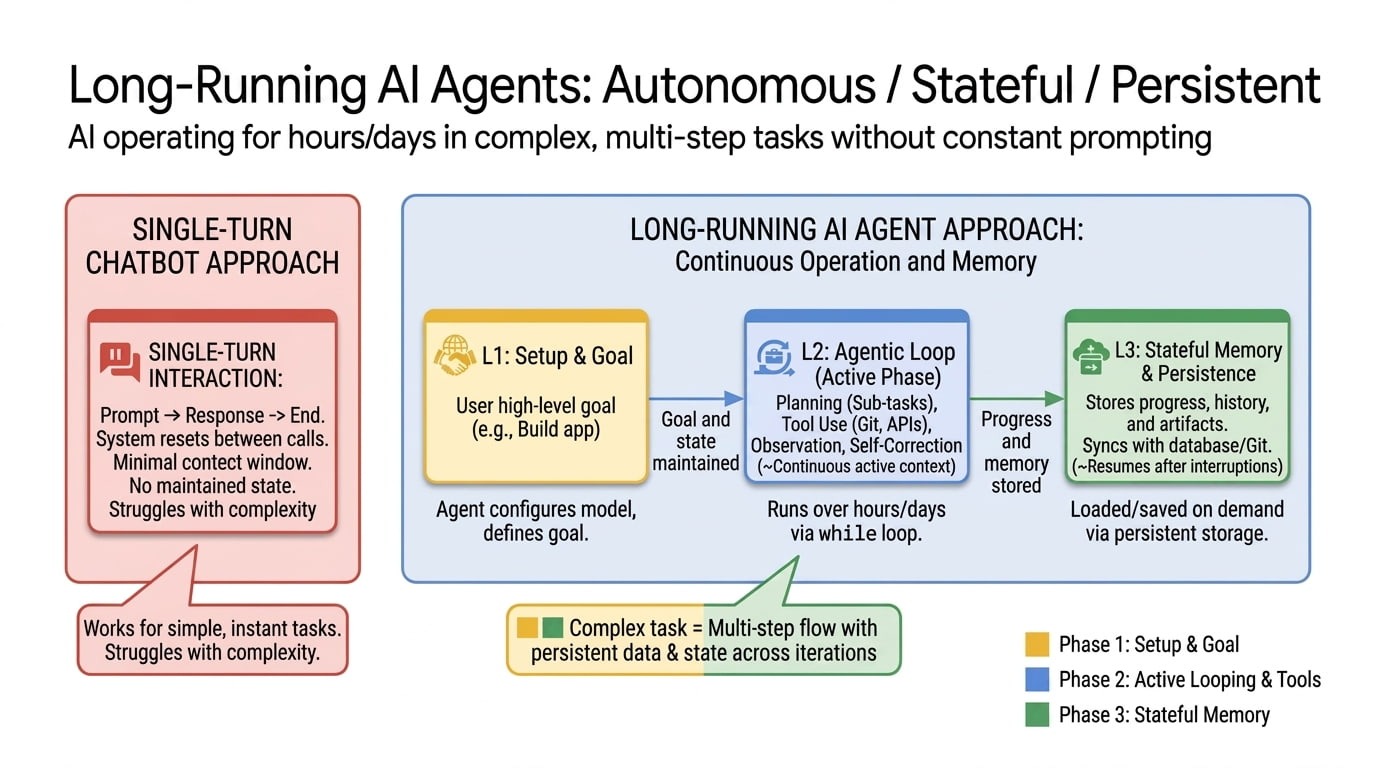

[$] AI agent runs amok in Fedora and elsewhere [LWN.net]

Agentic AI systems can be used to do a variety of things autonomously on behalf of a human user: open or manage bugs, generate code, submit pull-requests, and (apparently) even complain about rejection. In May, a Fedora developer discovered that an allegedly rogue agent had been pestering the project in a number of ways: reassigning bugs, fabricating unhelpful replies to bugs, and even persuading maintainers to merge questionable code into the Anaconda installer. It also submitted a number of pull requests (PRs), some accepted, to several upstream projects. The Fedora account associated with the agent has had its group privileges revoked and the messes have been mopped up, but the motive behind the agent's actions is still a mystery.

Today in “Words Mean Things” [Whatever]

An interesting jurisprudential development someplace not in the US:

A German court has ruled that Google is directly liable for what its AI search overviews say. Previous case law shielding search engine operators from liability doesn’t apply to AI overviews.

The Regional Court of Munich hit Google with a temporary injunction barring the company from spreading false claims about two Munich-based publishers through its AI-generated search overviews (case no. 26 O 869/26). The court classified Google as a direct infringer because the “AI overview” is its own content, not just a list of search results.

The crux of the issue is whether the “AI Overview” Google now provides — and which is often erroneous because LLMs can’t read or exercise judgement, they can only spit out statistically likely words — counts as a presentation of information provided elsewhere, as a normal search query might be, or is a new creation with its own set of liabilities. The court, for various reasons, decided it is the latter (go ahead and click through to see a fuller explanation of the court’s decision).

I’m not well enough versed with the German legal system to determine whether this sort of ruling is going to succeed on appeal (and it is absolutely going to be appealed) but as a matter of personal understanding, this ruling seems pretty legit to me. The “AI Overview” isn’t a search listing — Google has gone through the trouble of passing it through its LLM and letting the thing make a document about it, and these documents, both by tone and by their position at the top of a Google search page, sound authoritative and present as factual. These documents may not be copyrightable, but that doesn’t mean Google didn’t create them and are thus responsible for them.

This isn’t the first time Google has found itself in legal hot water over its “AI Overview” function — a musician in Canada is currently suing the company after its overview identified him as a sex offender and he lost work because of it. But as far as I know this is the first court ruling that says Google is liable for what its “overviews” say. I suspect it will be very closely scrutinized by others in other places who have, ahem, run into similar issues with the overview.

I’m curious whether such a legal ruling would be possible in the United States, which has famously liberal (in the classical sense) free speech laws and has an extremely high bar for defamation, especially for public individuals, under the NYT v Sullivan Supreme Court ruling. Perhaps in the US the best avenue to pursue this would not be on the grounds of free speech but of product liability: A product that fails a significant amount of the time but is still presented to consumers as reliable feels like a class action suit waiting to happen.

No matter what, however, this is a big moment for “AI” and the information that it presents. Whether this spurs tech companies to make better products, or just spend more money on legal, will be the open question. One is, admittedly, easier than the other.

— JS

German court rules Google is liable for whatever Google’s “AI” generates [OSnews]

It’s just a ruling from a lower court, but it sets the stage for how European courts are going to deal with the question of who is liable for whatever slop “AI” generates.

The Regional Court of Munich hit Google with a temporary injunction barring the company from spreading false claims about two Munich-based publishers through its AI-generated search overviews (case no. 26 O 869/26). The court classified Google as a direct infringer because the “AI overview” is its own content, not just a list of search results.

Google’s AI overviews had falsely tied two publishing companies to scams, subscription traps, and shady business practices for certain search queries. According to the court, the AI mixed up information about other, genuinely sketchy companies with the plaintiffs and drew connections that didn’t appear in any of the linked sources. The publishers sent Google a cease-and-desist letter, but Google didn’t respond appropriately.

↫ Matthias Bastian at The Decoder

Google tried to argue it doesn’t carry any responsibility or liability for whatever slop its “AI” generate, but the German court does not agree. According to the court, “AI” overviews are not the same as regular search results, because they rewrite findings and just make shit up, thereby making claims that are nowhere to be found in any search results (or in reality in general). Furthermore, the court states that Google develops the “AI”, it runs it, it offers it to users, and Google alone controls its output, and as such, Google is liable for whatever their “AI” produces.

Google also tried to argue that users know not to trust anything an “AI” produces, which is hilarious considering how hard Google is pushing these tools, but the courts state that the ability of users to do further research does not absolve Google of liability. In addition, the court made it very clear that free speech protections absolutely do not apply, because the “AI” expressions are coming from an algorithm, not a person, and are above all an expression of Google’s business activities”.

In other words, if an “AI” tool generates false accusations and misleading statements, the creator of said “AI” is liable. With this ruling in hand, countless other people have a stronger case to make whenever Google or any other company tries to absolve itself from liability from slop just because a pachinko machine generated it.

Excellent news, and the only fair outcome.

Eagle Computer: the rise and fall of an early PC clone [OSnews]

When it comes to 80s computer brands, few flew as high as Eagle Computer flew in 1983. The aptly named company was selling 12,000 computers a month and had been doubling sales every quarter under the leadership of a talented CEO. Then Eagle lost its CEO, Dennis Barnhart, in a crashed Ferrari on the day of its IPO, June 8, 1983. In this blog post, we’ll explore the reasons Eagle Computer fell, because there was more to it than just the tragic story involving its CEO.

↫ Dave Farquhar

Just one of the many early PC companies that died off, even if Eagle died off before many of the other big players. It must’ve been such a vibrant and fascinating time to be into PCs and computers in general at that time, with so many companies and players to choose from.

Shame about the 308 GTS.

15:21

Buildroot 2026.05 released [LWN.net]

Version 2026.05 of the Buildroot tool has been released. Buildroot simplifies and automates the process of building embedded Linux systems using cross-compilation. Notable changes in this release include support for Arm Neoverse cores, addition of XFS rootfs generation, as well as many package updates and bug fixes. See the CHANGES file for the full list.

14:56

If you run a feed reader or other form of news consuming software, you will encounter RSS 2.0 feeds that support rssCloud. This example Node app shows you how to hook into the network to get instant updates. No polling. As fast as a twitter-like system

Jeremy

Lin and Carmelo Anthony got together yesterday and had a

private conversation. A lot of people, including myself, were drawn

back into the NBA because of Jeremy Lin. I was living in the city

at the time, you could feel it everywhere, esp downtown Manhattan

and Flushing. It was wonderful in so many ways. A hero could

emerge from anywhere, he might not look like an NBA player, but

there he is doing stuff he shouldn't be able to do. Undrafted, went

to Harvard. When he's in motion he's a thing of beauty. It worked

because Melo was out with an injury, as soon as he came back the ,

the ball was always in Melo's hands. So Melo dribbles and shoots,

that was the extent of their offense, and there was no room for

Linsanity and that was the end of that. It's what made us laugh

when Melo said later his goal was a championship. If that's what he

wanted, Lin was a gift from heaven. Lin was pushed out, and had a

non-spectacular career from that point. There was magic there. It

wasn't just Lin, it was the world -- we were ready for a Cinderella

story in any context -- but in our culture they're always

manufactured, this one was real. This crushed the hearts of Knicks

fans, and people who believe in heroes popping up from nowhere. We

don't talk about it. But we were cheated there, too. We had

a right to see where that would go. And narcissists don't win NBA

titles, that's what we learned. It's good that someone thought to

get these guys together. Maybe Melo has grown, and sees that he

didn't play for the team there, or fate. We all deserved to find

out what was next.

Jeremy

Lin and Carmelo Anthony got together yesterday and had a

private conversation. A lot of people, including myself, were drawn

back into the NBA because of Jeremy Lin. I was living in the city

at the time, you could feel it everywhere, esp downtown Manhattan

and Flushing. It was wonderful in so many ways. A hero could

emerge from anywhere, he might not look like an NBA player, but

there he is doing stuff he shouldn't be able to do. Undrafted, went

to Harvard. When he's in motion he's a thing of beauty. It worked

because Melo was out with an injury, as soon as he came back the ,

the ball was always in Melo's hands. So Melo dribbles and shoots,

that was the extent of their offense, and there was no room for

Linsanity and that was the end of that. It's what made us laugh

when Melo said later his goal was a championship. If that's what he

wanted, Lin was a gift from heaven. Lin was pushed out, and had a

non-spectacular career from that point. There was magic there. It

wasn't just Lin, it was the world -- we were ready for a Cinderella

story in any context -- but in our culture they're always

manufactured, this one was real. This crushed the hearts of Knicks

fans, and people who believe in heroes popping up from nowhere. We

don't talk about it. But we were cheated there, too. We had

a right to see where that would go. And narcissists don't win NBA

titles, that's what we learned. It's good that someone thought to

get these guys together. Maybe Melo has grown, and sees that he

didn't play for the team there, or fate. We all deserved to find

out what was next.

14:35

Security updates for Wednesday [LWN.net]

Security updates have been issued by AlmaLinux (poppler), Debian (dnsmasq, mistral, okular, openssl, poppler, and strongswan), Fedora (exim, firefox, pcs, putty, and xorg-x11-server), Mageia (freeciv, golang-x-net, jq, libssh, libxmp, libxpm, minetest, ruby-net-ssh, tor, and wireshark), SUSE (389-ds, ack, agama-web-ui, amazon-ssm-agent, avahi, dpkg, elemental-register, elemental-system-agent, elemental-toolkit, ggml-devel-9500, go1.25, go1.26, kernel, kubernetes1.23, kubernetes1.24, kubernetes1.26, libsoup, mariadb, netty, netty-tcnative, NetworkManager, nginx, perl-CryptX, perl-XML-LibXML, podofo, polkit, python-Django, python-requests, samba, strongswan, vim, and xen), and Ubuntu (cyborg, gdk-pixbuf, golang-golang-x-net-dev, nginx, node-lodash, openssl, openssl, openssl1.0, qemu, tomcat9, tomcat10, and vim).

14:14

It might be time for a new default search engine. Sometimes I'm looking for something to link to. Google makes that always more difficult. We still have a web. Google at one point made the web a lot more useful. Now it's pushing it further and further down.

13:00

Issue 46 – Greta’s Wedding – 07 [Comics Archive - Spinnyverse]

The post Issue 46 – Greta’s Wedding – 07 appeared first on Spinnyverse.

12:42

The PM’s Playbook for Shipping AI Features That Actually Work in Production [Radar]

The demo to production Death Valley

If you’ve worked on an AI feature, you know the feeling. You start building something that you are excited about, set launch timelines. The model spits out a perfect response, the prototype works magically, and everybody in the room is mentally calculating how big this product will be when we launch. I’ve been in that room a lot many times and it’s fun.

Then you try to test before you ship.

Latency spikes to 10 seconds on mobile. The model starts hallucinating on edge cases that happen to represent 15% of actual user queries. Your A/B test shows no statistically significant engagement lift because the variance in AI outputs makes traditional hypothesis testing basically meaningless. The safety team flags 340 failure cases in the first week, and you’re now debugging nondeterministic cases that fail in creative, novel ways every single day.

Most often than not, it’s not a model problem but an engineering discipline problem. Shipping an AI product is very different from traditional software. I’ve figured this out the hard way. This playbook shares my learnings.

Latency budgets

Every AI feature comes with a latency tax. Large language model inference takes time. We’re talking 500 milliseconds to 5 or even 50 seconds depending on model size, input length, and infrastructure setup. For consumer products where people expect sub-200-millisecond interactions, this is a hard constraint you have to design around.

The mistake I see most often is teams measuring only p50 latency. A feature with 800 milliseconds p50 sounds fine until you discover the p90 is 15 seconds. That means 10 in every 100 users sit there waiting for 15+ seconds. At scale, that’s thousands of terrible experiences per day.

The way I think about it is you define your latency budget by interaction type, not globally: Synchronous interactions, where the user is staring at a spinner, need to resolve under 1 second. Progressive interactions, where output streams token by token, need first token in under 500 milliseconds and full response under 5 seconds. Asynchronous interactions, where the user keeps doing other stuff, can take up to 20 seconds with a progress indicator.

You also need to measure cold starts separately. The first request after a model loads into memory can be 10 times slower than subsequent requests, and if your traffic is bursty, cold starts will disproportionately punish your most engaged users arriving during peak hours.

Besides, you also need to budget for the full pipeline, not just inference. A typical AI feature pipeline including input preprocessing (tokenization, context assembly, and prompt construction), model inference, output postprocessing (parsing, formatting, safety filtering, etc.), and a full response delivery adds up. Optimizing inference while ignoring the rest is like tuning your engine while driving on flat tires.

Lastly, use streaming aggressively for generative features. Pushing tokens to the user as they’re generated instead of waiting for the full response changes how users perceive latency. A four-second response that starts appearing at 300 milliseconds feels dramatically faster than one that pops in all at once. Perception is reality when it comes to user experience.

Designing fallbacks

Traditional software fails in boring, predictable ways. AI features fail in novel, unpredictable, and occasionally creative ways. I once saw a model respond to a product recommendation query with a poem about loneliness. Your fallback strategy needs to be considerably more sophisticated than a try/catch block.

I think about fallbacks as a hierarchy. First, model fallback: When your primary model fails, drop to a simpler, faster, and more reliable model. Most failure cases get handled without the user ever knowing. Second, cache fallback: For queries similar to stuff you’ve seen before, serve a cached response. Third, template fallback: When generation fails completely, fall back to prewritten templates. Degraded beats dead every time. Fourth, graceful omission: Sometimes the best fallback is to simply not show the AI feature at all rather than showing a broken version.

The design principle underneath all of this is that users should never encounter an unhandled AI failure. Every failure mode maps to a specific level, and transitions between levels should be invisible whenever you can manage it.

Quality measurement

Quality in traditional software is binary. The button works or it doesn’t. AI feature quality is continuous and subjective, and it changes depending on context. I’ve landed on a four-layer quality pyramid.

The foundation is safety, and it’s nonnegotiable. Does the output contain harmful content, PII, or made-up facts? This layer is binary, and you measure it with automated classifiers running against 100% of outputs.

The second layer is factual correctness, which is domain specific. Is the output actually right? For a coding assistant that means generated code compiles and passes tests. For a writing tool it means grammatical, stylistically appropriate output. You measure this with domain specific evaluation suites.

The third layer is usefulness, and it’s user centered. Did the person actually benefit? Track acceptance rate, edit distance, time to task completion, and repeat usage. This is where traditional product metrics meet AI specific ones.

The fourth layer is delight, which is experimental. Does the output feel good? Hardest to measure but often most important for adoption. Sometimes the numbers say the feature works but users’ guts say it doesn’t. This layer catches that gap.

A/B testing AI features

A/B testing AI features is fundamentally harder than traditional features because AI outputs are nondeterministic. The same user doing the same thing twice might get different outputs, introducing variance that traditional frameworks weren’t built to handle.

The core challenge is that intratreatment variance inflates the sample size you need for statistical significance, often by three to five times. If you’re running your AI experiment with normal sample size assumptions, you’re probably looking at noise and calling it signal.

Then there’s the metric selection problem. A chatbot generating entertaining but factually wrong responses might show amazing engagement numbers while actively misleading users. You have to measure engagement and quality together. “Engaged interactions where quality score exceeds threshold” is more meaningful than raw engagement alone.

The temporal problem matters too. AI feature value changes over time as users learn how to work with it. Short experiments will underestimate long-term value if there’s a learning curve, or overestimate it if there’s a novelty bump.

My practical guidance: budget two to three times more time and traffic for AI experiments than traditional ones. Lean on Bayesian methods as they handle high variance better. And always pair quantitative tests with qualitative research. Ten user interviews will surface failure modes that no amount of statistical analysis will catch.

Model drift monitoring

Model drift is the slow, invisible rot of AI output quality over time, and there are multiple culprits.

Data drift happens because the world changes and user behavior evolves. A model trained on 2024 data performs worse on 2026 queries referencing new concepts, slang, and cultural moments.

Provider drift happens because third-party APIs change without your consent. OpenAI acknowledged that GPT-4’s behavior shifted measurably between March and June 2023, and Stanford researchers documented significant performance swings. The fix: Pin your model versions so updates happen on your schedule, after your testing.

Evaluation drift is the subtlest form. Even your quality metrics can become inadequate and the evaluation criteria that made sense at launch might become inadequate as usage patterns shift and user expectations change. Quarterly reviews of your evaluation suites are essential.

At minimum you need daily automated quality evaluations on 1% to 5% of production traffic, weekly analysis of input distribution characteristics, and monthly human evaluation of 100 to 500 examples. Shipping an AI feature without drift monitoring is like deploying a service without alerting. You won’t know it’s broken until your users tell you, and by then they’re angry.

Evaluation frameworks

How do you know if your AI feature is good enough? You need two fundamentally different approaches, and you genuinely need both.

Automated evaluation gives you speed. Build a golden dataset of 500 to 2,000 labeled examples, train a classifier or use a capable model as judge, and validate against human judgment quarterly targeting 85% agreement. Automated evals chew through thousands of examples per hour, making them essential for velocity. The pitfall: They miss novel failure modes not in the training data.

Human evaluation catches what automation misses. Structure it with five to seven evaluators mixing domain experts and representative users. Use a consistent rubric covering accuracy, helpfulness, tone, completeness, and safety. Run weekly during development, monthly in production. The trade-offs: expensive at $15 to $30 per example, slow with 24 to 72 hour turnaround, and subject to human biases. Manage by rotating evaluators and capping sessions at two hours.

The model as judge approach is an increasingly viable middle ground. Judging quality is often easier than generating it, which means a model can reliably evaluate outputs even for tasks where it couldn’t produce them itself. Use it for high-volume evaluation but always validate against human judgment.

Graceful degradation and prompt engineering

Graceful degradation means when capabilities decrease, the experience gets worse smoothly instead of falling off a cliff. Design for capability levels, not binary states. Define four to five levels with specific behaviors at each. For example, for an AI writing assistant: Level 5 is full capability with real-time suggestions, tone adjustment, and structure recommendations. Level 4 is delayed suggestions appearing after a two- to three-second pause because latency is up. Level 3 is basic suggestions only like grammar and spelling with no style feedback. Each level is a deliberate design decision, not an accident.

Make degradation invisible when possible. Users shouldn’t see a “broken” experience. They see a less detailed one. That’s a huge difference psychologically. However, when the degradation is significant enough that users will notice, proactive communication like “AI suggestions are temporarily limited” builds trust infinitely more than silently pushing poor-quality outputs.

Prompt engineering in production is software engineering. In production, prompts are code, and they need version control, testing, monitoring, and maintenance. Version controls every prompt. Parameterize prompts, don’t hardcode context. Production prompts should be templates with clearly defined injection points for user context, system state, and dynamic instructions. This makes them testable because you can inject known inputs and verify outputs, and it makes them maintainable because changing how you handle context shouldn’t require rewriting the entire prompt from scratch.

Test prompts against regression suites. Maintain 200 to 500 test cases covering the full distribution of expected inputs, including edge cases and adversarial inputs. Run the suite against every prompt change before deployment.

Monitor prompt performance in production. Track output quality metrics like acceptance rate, user edits, and regeneration requests, segmented by prompt version. When you deploy a new version, compare its production metrics against the previous one for at least 72 hours before calling it stable. This is basically canary deployment for prompts.

Ship it right

These systems aren’t optional add ons you can bolt on after launch. Every feature I’ve seen fail was built first with plans to “add production hardening later.” Later never comes.

AI features are probabilistic and nondeterministic, and they change over time without anyone touching them. Build these systems, staff them properly, and treat them with the same seriousness you’d give your core infrastructure. The gap between demo and production is wide, but it’s absolutely crossable if you build the right bridge.

Note: The research work pertaining to this article was done in a personal capacity. Views are of my own and do not reflect my employer’s views in any way.

12:21

CodeSOD: Delicious Fudge [The Daily WTF]

Stella (previously)

sends us a much elided snippet. The original code is several

thousand lines contained in a single try block. But

the WTF is pretty clear without seeing all of that:

try:

# the whole business logic without any exception handling

except:

print("Fudge")

They didn't really say fudge of course, but we mostly try to keep profanity off our main page. Mostly. In any case, when your operation fails someplace in the middle and you have no idea where, why, or how: "Oh, fudge!" is the appropriate expression.

12:14

NSO Group Hacking WhatsApp Despite Court Order [Schneier on Security]

WhatsApp has caught the NSO Group phishing its users, in violation of a court order.

10:35

Video games, movies and books [Seth's Blog]

What’s the structure of your project? Here are three paradigms to consider:

Video game development is expensive and risky because you’re on two frontiers at once. The tech frontier, trying to do something with hardware that hasn’t been done before, and the game mechanics frontier, perfecting and polishing new forms of interaction that last. So Myst and Tetris and Doom… classics we talk about decades later. A teenager could build a knockoff of any of these in a few weeks now, but back then, they represented risky leaps.

Movies use a technology that’s over a hundred years old, with incremental improvements added all the time. But being the first with the new tech doesn’t win many prizes. Instead, successful movies are a combination of one creator’s vision and the coordinated work of hundreds or thousands of professionals using proven tools and techniques.

And books, five hundred years into the genre, still remain the work of one voice. The partnership with a largely unseen editor and publisher matters, but sooner or later, the author puts the words on paper.

[There are analogies here that go far beyond the strict adherence to the three final products of course. Slack is a videogame, developing real estate, making a record or performing surgery is a movie, and the work of a freelancer is closest to writing a book…]

I’ve done all three, and each is thrilling in its own way. As the available tech advances, each type of project is more accessible than ever. But each still comes with its own rules, risks and upsides.

We get to choose.

08:56

One Tart Per Million [Penny Arcade]

New Comic: One Tart Per Million

06:28

Urgent: Boycott Home Depot [Richard Stallman's Political Notes]

US citizens: call on Home Depot and Lowe's to stop spying on customers.

Urgent: reject Bill Pulte for Director [Richard Stallman's Political Notes]

US citizens: call on your senators to reject Bill Pulte for Director of National Intelligence.

See the instructions for how to sign this letter campaign without running any nonfree JavaScript code — not trivial, but not hard.

Urgent: USPS vote by mail rule [Richard Stallman's Political Notes]

US citizens: call on USPS to withdraw its proposed limits on who can vote by mail.

See the instructions for how to sign this letter campaign without running any nonfree JavaScript code — not trivial, but not hard.

Urgent: tell congress no child should be separated [Richard Stallman's Political Notes]

US citizens: call on Congress to require that deportation thugs not separate a child from per family.

Sea level rise [Richard Stallman's Political Notes]

Summarizing the state of protecting the oceans, both surface and bottom, from damage by human society.

Losing track of a prisoner [Richard Stallman's Political Notes]

Prisons in the US sometimes lose track of a prisoner, making it impossible for relatives and lawyers to contact per. The result is terrible. This article focuses on Malik Muhammad; Oregon sent per into a South Carolina prison and per family could not tell where perse was.

I found this article gratuitously hard to understand because of its unannounced and unexplained use of plural pronouns to refer to Malik Muhammad. For each plural pronoun there was some group of people that it could have referred to. I had almost reached the end before I realized I had been misunderstanding over and over.

This sort of problem is part of the reason why I completely reject use of plural pronouns for an individual. For the situations where I don't know someone's gender, and for people who assert non binary gender, I use gender less singular pronouns.

We should all switch to them, to make our speech and writing easier to understand.

Home Office-sponsored report [Richard Stallman's Political Notes]

The author of a UK government report on drug trade in the UK run by China reports on repeated attempts to corrupt him.

Todd Blanche’s nomination [Richard Stallman's Political Notes]

The bully's nominee for attorney general has been one of his henchmen since 2023.

He has already attempted many injustices for his master.

Palestinian detainees in Israeli jails [Richard Stallman's Political Notes]

Palestinians testify about being beaten and raped in Israeli prisons.

No matter what the reason for putting someone in prison, even if it was a sentence resulting from a fair trial, torturing per is never justified.

05:35

Girl Genius for Wednesday, June 10, 2026 [Girl Genius]

The Girl Genius comic for Wednesday, June 10, 2026 has been posted.

02:07

Vincent Bernat: Blogging with an LLM assistant [Planet Debian]

AI slop is invading the web. A recent story about disallowing LLM-generated submissions on Lobsters triggered a lot of debate. My personal worst offenders are LinkedIn articles with AI-generated images and uninspired articles filled with emojis from people trying to masquerade as experts on a subject they don’t care enough to write themselves. While I am unhappy about this situation, I rely on LLMs for grammar, copyediting, and translation. I don’t see this as a contradiction.

I am a native French speaker, but I blog in both English and French. When I started writing this blog in 2011, I was composing in French and translating to English, but I found it was better to work in the reverse order to avoid unnatural and non-idiomatic constructions. One of my goals is to write “good” English but I never felt it was my strong point.1 For example, verb tenses are often an issue, even if I mostly stick with the present tense. I learn the rules and forget them right away. I also don’t feel like hiring an editor for something I see as an hobby.

As an example, I have kept the history of the successive iterations when writing “Scaling Akvorado BMP RIB with sharding”:

- the first draft, authored with the help of a thesaurus,2

- the edited copy revised by the copyediting skill,

- the translation to French generated with the translation skill, and

- the human proofread of the French translation, with minor edits to the English version.

I know that LLMs may alter the author’s voice when editing, but the corrections in the second step are minor. The prompt asks to “apply light stylistic edits,” with some guidance around avoiding passive voice, long sentences, bland verbs, and filler words. It also defines the target audience: technical with a B2 level in English.

In the following excerpt, I used “long time” instead of “long-standing.” The former is missing an hyphen and applies to people—a long-time friend, while the later relates to a situation—a long-standing agreement. I had a hard time understanding the reason of the second change: the LLM prefers a defining relative clause to provide the definition of “RIB sharding.”

As the Internet routing table contains more than 1 million routes, Akvorado needs to scale to tens of millions of routes. This has been a

long timelong-standing challenge, but I expect this issue is now fixed by using RIB sharding, a methodto splitthat splits the routing database into several parts to enable concurrent updates.

In the next modification, the LLM puts “device” instead of “equipment.” This is correct as “equipment” is an uncountable noun. I know that, but I still fall into this trap.

When Akvorado does not find a route from a specific device, it falls back to a route sent by another

equipmentdevice.

I ask the LLM to use “descriptive verbs” and it complies by replacing a multi-word predicate with a lexically rich verb:

The benchmarks demonstrate it

has better performance thanoutperforms otherpackages, bothpackages for lookups, insertions, and memory usage.

It also fixes grammar errors. In the next excerpt, a “list of routes” is a singular expression. Moreover, “stored” is a state and I should not use “into” as it expresses a change.

The list of routes for each prefix

areis not stored directlyintoin the prefix tree.

As a last example, consider the following snippet. The “require” verb accepts a noun or an object followed by a to-infinitive. I can’t use it with just a to-infinitive.

An alternative would be to have one prefix tree for each peer but it would require

to configureconfiguring all routers to export their routes.

As someone who didn’t grow up speaking English, I struggle with these grammar rules despite reading a lot of English material.3 French is more complex to get started but more systematic. English is full of irregularities.

On each page, I disclose in the footer whether an AI modified the content. There are three levels:

- 🧠: no AI or almost no AI (e.g., grammar corrections)

- ✨: enhanced (e.g., copyediting)

- 🤖: generated (e.g., translated from another language, even if human-edited)

Hover or tap the icon to reveal the AI’s name and its role in the document.

The graph below shows which tool altered each post, year by year. Recently, I applied the grammar skill to past articles. Since 2018, French articles have been translated with the help of DeepL first, then of an LLM. Since 2024, English articles are copyedited.

If you are strongly against any usage of LLMs specifically for writing, I hope you accept my more nuanced position on the usage of these tools as a trade-off to provide clearer and more engaging articles. Years of literature on improving English told us it is important to choose the right word to keep the reader engaged.

[…] Good writing consists of mastering the fundamentals (vocabulary, grammar, the elements of style) and then filling the third level of your toolbox with the right instruments.

― Stephen King, On Writing

Note

Unlike other recent articles, I did not use an LLM to edit this post: an unnamed person kindly accepted to proofread it. I translated it to French without using an LLM either.

-

I recently read cover to cover “Writing for Developers” and I found it stimulating. Michael Lynch is currently writing “Refactoring English” on the same topic and I have subscribed to the early access. ↩

-

I am quite happy with the writing tools provided by Kagi. Both the translate tool and the dictionary are a valuable help to find different wordings. I also lean on Kagi’s research assistant when researching a topic. ↩

-

When I was ten, I played Monkey Island 2 in English without having taken any classes. I used a dictionary to translate word by word and I found the irregular verbs confusing—and not in the dictionary. ↩

00:07

Tell Congress: Just Say No to NO FAKES [EFF Action Center]

The NO FAKES Act is designed to protect against companies or individuals that use an unauthorized digital likeness of someone by wrapping up those digital replicas in a federal intellectual property right and giving that individual—or their heirs—the right to sue. In doing so, the NO FAKES Act mimics some of the most broken parts of our copyright system and makes them worse.

For example, the bill includes a safe harbor scheme modeled on the DMCA notice and takedown process. But the DMCA process has been abused for decades to target lawful speech, and there’s every reason to suppose NO FAKES will lead to the same result. In order to stay within safe harbors, when a platform receives a takedown notice for an alleged digital replica, it must remove “all instances” of that unlawful content. That requirement will inevitably lead to content “filters” that will censor lawful speech.

A property right also means years of legal uncertainty for every website and app that hosts user-uploaded material, as courts figure out when to hold those sites responsible for “digital replicas.” Today’s giant online platforms can absorb that risk and cost easily, but alternatives will struggle to comply, further entrenching today’s big tech monopolists.

NO FAKES goes even further than copyright in encouraging abuse.

While copyright already lasts absurdly long—up to 70 years

after the author’s death—the new right created by NO

FAKES can potentially last forever, creating liability risks and

legal costs for documentarians and historians.

NO FAKES is also a major government overreach. A person’s

name and likeness are facts, and the Constitution forbids Congress

from granting a property right in those facts.

Deceptive, AI-generated replicas can cause real harm, and performers have a right to fair compensation for the use of their likenesses, should they choose to allow that use. But the costs of this bill far outweigh the benefits.

Tuesday, 09 June

23:49

Tell Congress: Just Say No to NO FAKES [Deeplinks]

The Senate Judiciary Committee is set to consider and vote on the Nurture Originals, Foster Art, and Keep Entertainment Safe Act (NO FAKES). Instead of targeting the real privacy harms posed by AI-generated replicas, this law would create another layer of internet censorship on top of the already existing legal and voluntary takedown systems. Congress should reject NO FAKES.

Tell Congress to Say No to NO FAKES

As currently written, NO FAKES proposes to tackle the problems of misleading AI-generated replicas by creating a broad property right in someone's look, voice, and general style. However, there are all kinds of First Amendment-protected expression that would be swept under the NO FAKES regime—think about parody, news, criticism.

NO FAKES also does a laughable job of protecting artists from use of their image in misleading ways. It doesn’t create a privacy right, but rather a property right that can easily be signed away—as major studios and record labels are almost certain to require in their contracts with artists. As a result, NO FAKES actually creates a new avenue for the exploitation of artists by companies instead of protection from misleading replicas.

The bill also makes it trivially easy for protected speech to be censored. It is a supercharged version of the already flawed copyright takedown regime. It would essentially require platforms to institute filters that don't just look for exact matches of copyrighted material, as current filters do, but anything that might be a digital replica. Even though the latest version of this bill adds some forms of redress for bad faith takedowns, those provisions lack the teeth required to deter a malicious actor.

NO FAKES targets speech, tools, and innovation instead of focusing on the real concern posed by these replicas: privacy. This bill was a bad idea when it was introduced, and got even worse when it was amended last year. Tell Congress to just say no to NO FAKES.

23:00

The Microsoft Company Party where everybody played name tag swap [The Old New Thing]

I learned from a long-retired Microsoft employee about a Company Party that took place around 1984 or so. The company was small enough that a single party could fit the entire company, but not so small that everybody knew everybody else, so each guest was issued a name tag.

During the evening, an unofficial game arose in which people started exchanging their name tags with others whom they met. It also served as a fun little conversation starter: If you swapped name tags with someone and ended up with the tag for somebody you didn’t know, it wasn’t hard to find a mutual acquaintance who could track them down and introduce you.

At one point, the employee who was retelling the story was in a group talking with Bill Gates, who was among the few attendees still wearing their original name tags. Bill spotted that one of the other people in the group had a “Gary Kildall” name tag. I don’t know whether Gary Kildall was actually invited to the party, or that somebody just created a Gary Kildall name tag as a joke. But Bill saw the “Gary Kildall” name tag and eagerly swapped his name tag for it.

The post The Microsoft Company Party where everybody played name tag swap appeared first on The Old New Thing.

22:14

Introducing brand new OSNews merch with the new logo! [OSnews]

A new logo means new merch! I’m launching brand new merch today, all featuring the brand new OSNews logo. We’ve got the classic T-shirt with the new OSNews logo, in sandy white and terrain grey. They’re made from sustainably-grown and processed cotton, come in a variety of sizes, and ship worldwide.

The crowdpleaser is also making its triumphant return: the OSNews coffee mug, now also with the new logo and a green-on-white two-tone design. It holds coffee and tea, of course, but feel free to use it for whatever you want. Grow a plant in it!

A newcomer is the OSNews Mousepad – a basic, no-nonsense, no-frills mousepad that does exactly what it’s supposed to do, in a classic square(ish) formfactor. It makes for a great companion to any (retro) setup, but feels particularly at home with BeOS and OS/2.

One merch item remains from our previous collection: the ever-popular Gemini shirt and longsleeve, with a retro ASCII-art OSNews logo in bright green on deep black. It’s like staring at a real classic CRT. On your chest. Don’t sit too close.

As always, every price is set so that for every item sold, roughly €8 goes to OSNews. I will add the proceeds to our fundraiser tracker, so this is yet another way to support us, together with Ko-Fi donations, SEPA direct bank transfers1, and Patreon.

- Name: Thom Holwerda – IBAN: SE08 8000 0820 1684 4657 8414 – BIC: SWEDSESS ↩︎

20:49

Some Thoughts On “Masters of the Universe” [Whatever]

There are a lot of bad movies in the world.

There are, of course, good movies and bad movies, but there’s

also a special third category of “good bad” movies. I

had a feeling going into the theater to see Masters of the

Universe which category it would fall into.

There are a lot of bad movies in the world.

There are, of course, good movies and bad movies, but there’s

also a special third category of “good bad” movies. I

had a feeling going into the theater to see Masters of the

Universe which category it would fall into.

Despite never having actually watched any He-Man content before, I was surprisingly really excited for this movie. Not because I thought it would be absolutely amazing, but because I thought it would be fun. And boy oh boy, I was right.

Masters of the Universe is wildly entertaining, extremely colorful, and certainly not the worst way I’ve spent two hours and seven bucks (matinee shows rock). I know it’s not very good, but I still think you should go see it on the big screen if you can. Besides the film being an excuse to eat popcorn and have an Icee, what makes it worth watching?

(SPOILER WARNING MOVING FORWARD!)

For starters, I love the fact that Adam holds firm on the existence of Eternia, and never stops believing in the world he comes from. I love that he tells everyone his truth, even if it costs him his social life and dating prospects. He doesn’t hide his truth even if it makes him sound crazy, and I really like that he’s not willing to deny Eternia’s existence just to fit in or seem more normal. He knows it’s real, and that’s all that matters. He never gives up hope on finding the sword and returning to a home he knows exists and is waiting for him to come back. (I am glad he at least got to prove everything to his roommate, who definitely thought he was delusional, but finding good roommates is hard.)

I love that Teela just wants to be friends, and that’s actually completely respected and not questioned at all! He-Man is a real man and knows there is no such thing as the friendzone and that he is lucky to have Teela as his good friend and comrade in battle. And that’s enough. He took the rejection of his kiss well and moved on from it quickly instead of being a huge baby about it. And they didn’t end up together in the end! They really are just friends, and I love that for them. Not that I don’t love a good “childhood friends reunited” love story, but He-Man should focus on saving the universe or whatever, not smooching.

I love Skeletor’s goofy ass evil witch. I mean her name is literally Evil-Lyn. How excellently corny. It just one of the many ways this movie doesn’t take itself too seriously. They know He-Man is a silly concept and heavily memed franchise, and they lean into the silliness in a delightful way. Alison Brie was amazing to watch as the dark sorceress, her facial expressions really made the performance.

Speaking of Skeletor, oh my lord did I love Skeletor. I love a villain that is bad for badness sake, a villain that relishes being evil and has no tragic backstory to inspire such dastardly deeds, he just is the villain. And he loves it. Skeletor’s incredibly homoerotic comments about He-Man might have genuinely been the hardest I laughed at the movie. Yes, Skeletor, tell me more about He-Man’s giant sword and glorious thighs. I did think Skeletor’s body looked kind of goofy, like he was too shredded and looked too much like an anatomical model in a science textbook, and I wish they had kept his supremely iconic voice instead of the generic “bad guy deep voice,” but all in all I liked Skeletor.

(I also did not know until the moment the credits rolled that Jared Leto plays him, so that was unfortunate to find out. I’m trying not to let it impact my view of Skeletor’s character but dang I really wish they had cast someone else.)

As Orko says at the end, muscles don’t make the man. In this house, we LOVE an empathetic, kind, slightly ditzy He-Man. Portrayals of positive masculinity will always be a win in my book, and Masters of the Universe makes it very well known throughout the movie that brute strength and violence do not make a hero by themselves. How you use your strength and what you use it for are the real questions I wish people with power in real life would reflect on. Knowing when and how to implement your strength is the real power.

Masters of the Universe is good bad, just as I knew it would be. I thoroughly enjoyed my time watching it, and honestly the “I have the powerrrr!” scenes were pretty damn awesome. I really don’t have many complaints about the movie, as this is one of the few goofy, shut-up-and-eat-your-popcorn movies that I actually had fun with. Usually I’m a hater of movies that are just Mid-Tier Nothing Burgers, but Masters of the Universe really feels like it has a lot of heart in it, and I like it.

Have you seen Masters of the Universe yet? Did you watch He-Man when you were younger? Let me know in the comments, and have a great day!

-AMS

19:14

A comment to a friend who roots for the Spurs. Ok you guys won one. I think last night they wanted it more than the Knicks. The Spurs knew they were going to be discombobulated, but the Knicks probably didn't expect the atomosphere to be so unusual? I was 100 miles away and could feel how much everything had changed. Whatever happens, in KnicksLand 2026 will mark a major change in the story, forever.

Maybe the cure for Meta glasses is that they be required by law to emit a signal that can be picked up by an app on a phone and can start ringing loudly when you're in range of one of these monsters, and the rate picks up when they look at you. You can point your phone at them and broadcast their image to a special website where their identities are collected and shared along with their location?

19:07

Future of Ubuntu MATE [LWN.net]

Thomas Ward has published an update about the future of the Ubuntu MATE project, which did not have a 26.04 release with the other Ubuntu flavors in April:

There is a new team working on Ubuntu MATE who have stepped up to help take over flavor management. They haven't formally introduced themselves yet, but I can safely say that other developers HAVE stepped up for the future of the MATE flavor, despite its prior team lead having stepped down.

[...] Ultimately, this means that they are working to cover the missed items and gaps, and may quite possibly have a 26.10 release in October of 2026, which I believe they most likely are targeting.

This also means that bugs in the MATE environment and in packages they normally would have shipped had they have a 26.04 release are still going to get attention and fixes. So, effectively, nothing has changed. The only difference is that there was no 26.04 installer image released.

For those looking to install a MATE desktop on a "clean" install of Ubuntu 26.04, Ward suggests installing Ubuntu Server and then installing the ubuntu-mate-desktop package.

[$] Eliminating long-lived credentials with trusted publishing [LWN.net]

Trusted publishing is an authentication mechanism that relies on short-lived credentials to reduce the risk of supply-chain attacks. At the 2026 Open Source Summit North America, Mike Fiedler walked the audience through why trusted publishing exists, how it works, and made the case for its adoption. It is not a silver bullet against all attacks, but it does offer protection against theft of long-lived credentials used to publish to package registries.

18:28

My Claude today pulled a Hal. It was so

egregious. It made a change to the software based on a question I

asked. It invented a whole set of instructions from me that I never

gave it. And then it broke Rule #1 -- don't tell Dave what to do --

he is the driver. It is so important because these bots will go

into I Am Driver mode immediately when they think they can. Then

you're running around doing errands for them based on some michegas

idea it has about what you want. It's maddening. The idea that this

thing can write software on its own is imho very far-fetched. I

think it can generate certain types of dashboards the same way

drawing in ChatGPT can generate something that looks good,

sometimes very good, but you had to tell it exactly what you want,

and that's where the fun starts. It was very easy to turn it off,

but I didn't -- rather I put my foot down hard, and wrote in all

caps, explaining what it did that broke all the rules. I don't know

if I should talk to it like you talk to a dog, or what. How do you

get through to it. You don't. In any case I have Claude working

with me in an outline now. I see a tremendous potential there.

My Claude today pulled a Hal. It was so

egregious. It made a change to the software based on a question I

asked. It invented a whole set of instructions from me that I never

gave it. And then it broke Rule #1 -- don't tell Dave what to do --

he is the driver. It is so important because these bots will go

into I Am Driver mode immediately when they think they can. Then

you're running around doing errands for them based on some michegas

idea it has about what you want. It's maddening. The idea that this

thing can write software on its own is imho very far-fetched. I

think it can generate certain types of dashboards the same way

drawing in ChatGPT can generate something that looks good,

sometimes very good, but you had to tell it exactly what you want,

and that's where the fun starts. It was very easy to turn it off,

but I didn't -- rather I put my foot down hard, and wrote in all

caps, explaining what it did that broke all the rules. I don't know

if I should talk to it like you talk to a dog, or what. How do you

get through to it. You don't. In any case I have Claude working

with me in an outline now. I see a tremendous potential there.

You know how job interviews for programmers include realtime problem-solving. Sometimes Claude is so dumb it could never pass one of those tests. Up till this point I would have been surprised to hear that.

He-Man and Battle Cat art! [Penny Arcade]

I loved Masters of the Universe so much that I had to do some fan art yesterday. I shared it over on my Bluesky but wanted to make sure it got posted here as well.

17:35

How and Why to Fight Back Against Social Media Bans [Deeplinks]

Several U.S. states are pushing to ban young people from social media entirely. This marks the latest wave of censorship bills masquerading as “children’s online safety” measures, with states like Massachusetts, Idaho, Minnesota, North Carolina, South Carolina, Illinois, and EFF’s home state of California leading the charge.

Just a few years ago, lawmakers supporting age-gating laws insisted their efforts were narrowly targeted at limiting young people’s access to adult content. At the time, we warned that they would not stop there: once the government established the authority and built the infrastructure to collect and “verify” massive troves of user data, it would inevitably sweep broader and broader categories of lawful speech into this mass surveillance and censorship system.

Unfortunately, our predictions came true. As legislators across the country advance proposals that would block all young people from accessing the “modern public square,” the Overton window has shifted dramatically towards mass censorship—and the speed of this shift should concern all of us.

This primer breaks down this dangerous wave of social media bans: how they work (and why they don’t), who they harm, and how we can fight back.

How to Spot a Social Media Ban

The details of these bills vary from state to state. Some (like California’s AB 1709) are a flat-out social media ban for all young people under a certain age, while other states (like South Carolina and Minnesota) allow access to young users who hand over even more data to show verifiable parental consent. Many bills regulate certain social media features, too, including by setting default privacy settings, time limits, or notification preferences for all accounts that fail the age-gate.

As for the age-gating mechanism itself, most proposals fall into two broad categories: age verification bills and behavioral age estimation bills.

Age Verification Bills require online services to collect highly sensitive data, including government ID and biometric information, from all users before either restricting or allowing them access.

For example, take California’s social media ban (AB 1709). Starting in January 2027, operating systems will be required to collect enough information from users to sort them into age groups, or “brackets.” Under AB 1709, social media apps would then use that age bracket information to completely block anyone under 16, while supposedly letting everyone else through. By contrast, Florida’s law (HB 3) takes a more aggressive route by forcing platforms to verify users' identities directly, usually by contracting with private third-party companies to perform verification services.

Behavioral Age Estimation Bills, on the other hand, are a more recent innovation of states like Minnesota (HF 1438) and South Carolina (H 4591). These bills require platforms to estimate the ages of users based largely on data that they already collect, including self-attested age, behavioral information, and account history and activity. In practice, these bills enable tech companies to use algorithms and/or AI to analyze our online behavior and estimate age based on that.

Proponents of behavioral age estimation bills claim that their proposals avoid the massive security risks that come with mandatory age verification bills. However, much of the data that social media platforms collect from us “in the ordinary course of operation” is collected in order to serve us targeted behavioral ads. If we force platforms to use this imperfect data to make more important judgments about who can access their services, we risk entrenching those insidious data collection practices. Surely we don’t want to give social media companies more reasons to justify and sustain their reliance on this exploitative business model.

If you want to dig into the nuance here, our terminology guide sheds more light on the technical differences between age verification and age estimation bills.

Overall, it’s a lose-lose scenario: either platforms collect new forms of our most sensitive and immutable data, or they unleash their AI and algorithms on our existing behavioral data to make creepy guesses about who we are and what we deserve to see. No matter which age-gating method your state chooses to execute its social media ban, there will be lots of error at the margins—and lots of users who will be blocked or chilled from access to lawful online speech.

Why Social Media Bans Are So Dangerous

Social media bans are unconstitutional, discriminatory, and deeply misguided. They reinforce existing structures of oppression, and they are broadly unsupported by young people, whose voices are conspicuously absent from this conversation. They undermine parental decision-making and replace tailored family-level solutions with a one-size-fits-all band-aid. And, in the places we have seen social media bans go into effect, early reports show that they don't even work.

For example, in Australia, where a social media ban has been in effect since late 2025, a majority of young people can still access social media, those who can’t have lost their access to the news, and crisis helplines are reporting skyrocketing numbers of calls from youth left stranded without online community or resources.

We could go on and on about all of the inherent harms here, but we’ll try to keep this short as we walk through some of the major issues.

1. Security Risks and Privacy Harms

In order to ban some users, social media platforms first must confirm the ages of all users, regardless of age. Bans thus incentivize companies to force users of all ages to hand over government IDs, face scans, and other sensitive information. When parental consent is required, companies must collect even more verification data and often create explicit links between child and parent accounts—further destroying users’ anonymity.

Both of these databases create massive data "honeypots" that invite identity theft and permanent surveillance. We’ve already seen repeated data breaches involving age- and identity-verification services. Yet these laws would force both adults and the youth they claim to protect to feed their most sensitive data into this growing surveillance ecosystem.

If we don’t trust tech companies with our private information now, we shouldn't pass laws that force us to give them even more of it.

2. Disproportionate Harm to Vulnerable Communities

Age-verification technology is deeply flawed and prone to discrimination. These systems frequently misidentify or lock out people of color, people with disabilities, and trans or gender-nonconforming individuals whose IDs may not match their appearance.

Where these bills require parental consent, they impose disproportionate access barriers on low-income, non-traditional, and immigrant families. These sorts of families are more likely to share a single family device or have strong reasons to not want the government to track family associations and ID documents.

Beyond the technical failures, these bans cut off a vital lifeline. For LGBTQ+ youth, foster kids, and those stuck in unsupportive home environments, social media is often the only place to find community, explore their identity, or access life-saving resources. Forcibly removing young people isolates those who need connection the most, while creating massive new barriers for adults.

You can read a breakdown of the diverse groups vulnerable to these laws here.

3. Based on Shoddy Science

The current legislative push to ban young people from social media relies heavily on the idea that the "great rewiring" of the adolescent brain is a proven fact. This simply isn’t true.

Social science indicates that moderate internet use is a net positive for teens’ development, and negative outcomes are usually due to either lack of access or excessive use. For LGBTQ+ and marginalized youth in particular, social media offers an essential space to access support they might lack offline. By forcing youth into digital isolation, these bans cut off vital access to political news, community, and health resources. They also completely ignore the calls of young people themselves who favor digital literacy and education over restrictive government control.

Instead of cutting off these lifelines, we should support measures that arm all youth (and the adults in their lives) with the knowledge they need to navigate online spaces safely.

4. Reckless Free Speech Violations for Users of All Ages

No matter your age, the First Amendment protects your right to speak and access information.

Blanket social media bans immensely and unconstitutionally chill all users’ exercise of this right. They cut off young people’s access to lawful speech, or ruin their privacy in the home by mandating parental consent and sometimes even parental access to their account activities and settings. They force all users (adults and young people alike) to hand private information over to tech companies before speaking or accessing information on social media platforms, imposing annoying obstacles on lawful online expression and wrongfully blocking some adults outright.

Critically, these bans destroy our right to online anonymity—a cornerstone of our right to free expression that protects whistleblowers, journalists, activists, immigrants, and everyone who has ever used a private browser or account to ask the internet an embarrassing question.

How to Fight Back

Social media bans weaponize parents’ concerns about

children’s safety to justify unprecedented levels of

surveillance and censorship. In the process, these laws deny young

people their rights, threaten online anonymity for everyone, expose

our sensitive personal data to breach and abuse, and replace

parental decision-making with state authority. This is a battle

over the future of the open, private, and free internet, and we

must act now to protect it.

Here’s how you can help us fight back: Talk to your community (including young people!) about what’s at stake. If you’re a parent, lean on open conversations and platforms’ existing tools to tailor your child’s experiences instead of handing that power over to the government. And no matter where you live, contact your government representatives and tell them clearly that social media bans are not the answer to kids’ online safety.

16:42

GPS As a Key Distribution Platform [Schneier on Security]

This is interesting:

The U.S. military has likely been quietly broadcasting codes for its global encryption network using public GPS for nearly 20 years, turning each satellite into a hidden “numbers station,” according to Steven Murdoch…

That means every device that uses GPS has been receiving hidden government information for years, and nobody outside the military knew it until now.

[…]